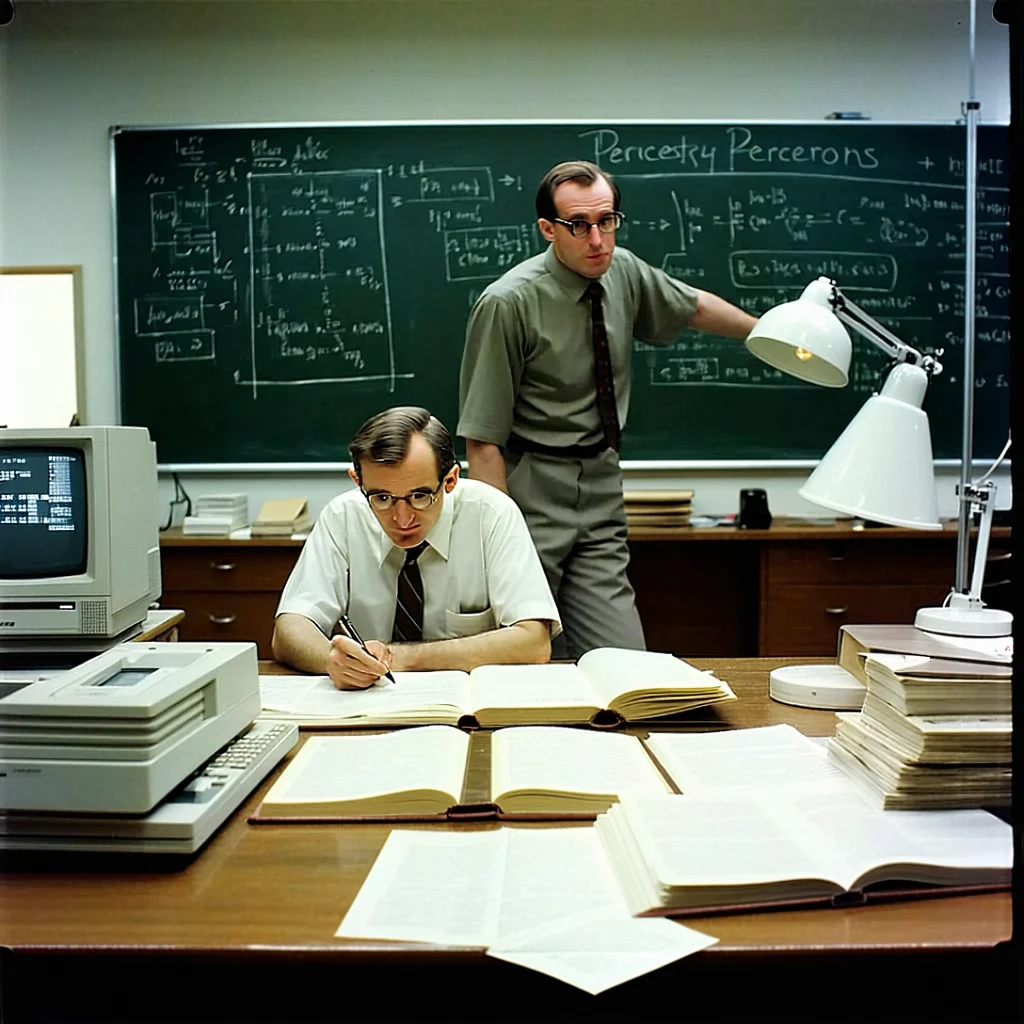

Minsky & Papert Publish "Perceptrons"

Marvin Minsky and Seymour Papert publish "Perceptrons: An Introduction to Computational Geometry" (MIT Press, 258 pp., ISBN 0-262-13043-2), mathematically proving that single-layer perceptrons cannot compute the XOR function or any other predicate that is not linearly separable. The book is a rigorous critique of the perceptron developed by Frank Rosenblatt, Minsky's schoolmate at the Bronx High School of Science (Rosenblatt graduated in 1946) and his intellectual rival in a debate that embodied the fundamental schism in AI between symbolic reasoning and connectionism. Although the proofs applied strictly to single-layer networks, the book was broadly interpreted as a death sentence for neural network research altogether. Funding from ARPA (later DARPA), the Office of Naval Research, and other agencies quickly dried up. The field entered what would later be called the "AI winter" for connectionism, a 17-year exile during which neural networks were considered a discredited approach and only a handful of researchers continued the work. Notable among them was Geoffrey Hinton, who in 1986 co-authored, with David Rumelhart and Ronald Williams, the backpropagation paper in Nature. That paper demonstrated that multi-layer networks with hidden layers could learn exactly the representations that Minsky and Papert had shown to be impossible for single-layer architectures. Rosenblatt himself died in a boating accident on Chesapeake Bay in 1971, just two years after publication, and never saw the vindication of his connectionist vision. The book was reissued in an expanded edition in 1988 with an epilogue acknowledging multi-layer networks, and again in 2017 with a foreword by Léon Bottou. Often cited as both the most important and the most damaging book in AI history, "Perceptrons" inadvertently delayed the deep learning revolution by nearly two decades, but its rigorous framing of the problem also defined exactly what needed to be solved.
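The XOR limitation can be checked by hand. A single threshold unit that outputs 1 when w1·x1 + w2·x2 + b > 0 would need b ≤ 0, w1 + b > 0, w2 + b > 0, and w1 + w2 + b ≤ 0. Adding the two middle inequalities gives w1 + w2 + 2b > 0, so w1 + w2 + b > -b ≥ 0, which contradicts the last condition. The Python sketch below illustrates the same point empirically. It is not taken from the book: the function names, the learning rate, and the epoch limit are illustrative assumptions, and the two-layer network is wired by hand rather than trained with backpropagation.

```python
from itertools import product


def step(z):
    """Threshold activation: 1 if z is strictly positive, else 0."""
    return 1 if z > 0 else 0


def train_perceptron(samples, epochs=100, lr=1):
    """Train one threshold unit with the classic perceptron learning rule.

    Returns (converged, (w1, w2, b)). `converged` is True only if a full
    pass over the data produced no misclassification.
    """
    w1 = w2 = b = 0
    for _ in range(epochs):
        errors = 0
        for (x1, x2), target in samples:
            delta = target - step(w1 * x1 + w2 * x2 + b)
            if delta != 0:
                # Nudge the weights toward the misclassified example.
                w1 += lr * delta * x1
                w2 += lr * delta * x2
                b += lr * delta
                errors += 1
        if errors == 0:
            return True, (w1, w2, b)
    return False, (w1, w2, b)


def two_layer_xor(x1, x2):
    """Hand-wired network with one hidden layer: XOR = OR and not AND."""
    h_or = step(x1 + x2 - 0.5)    # hidden unit 1 fires if either input is 1
    h_and = step(x1 + x2 - 1.5)   # hidden unit 2 fires only if both are 1
    return step(h_or - h_and - 0.5)


INPUTS = list(product([0, 1], repeat=2))
AND = [(x, x[0] & x[1]) for x in INPUTS]
XOR = [(x, x[0] ^ x[1]) for x in INPUTS]

and_converged, and_weights = train_perceptron(AND)
xor_converged, _ = train_perceptron(XOR)

print("AND learned by a single unit:", and_converged, and_weights)
print("XOR learned by a single unit:", xor_converged)
print("XOR via one hidden layer:", [two_layer_xor(a, b) for a, b in INPUTS])
```

The single unit settles on a separating line for AND but never completes an error-free pass on XOR, however many epochs are allowed, because no such line exists. Adding one hidden layer is enough to represent the function.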